Adobe is currently positioning its Firefly system against OpenAI's DALL-E system for AI-generated images. Both systems rest on more or less the same technological framework, but they differ in how their image training data was compiled.

I was curious what the same prompt would produce in both systems… and, of course, which results I would like better.

The comparison between Firefly and DALL-E was not as straightforward as I initially thought. Since Firefly was trained on curated data, whereas DALL-E uses annotated data obtained by web scraping, I expected Firefly to produce the better results.

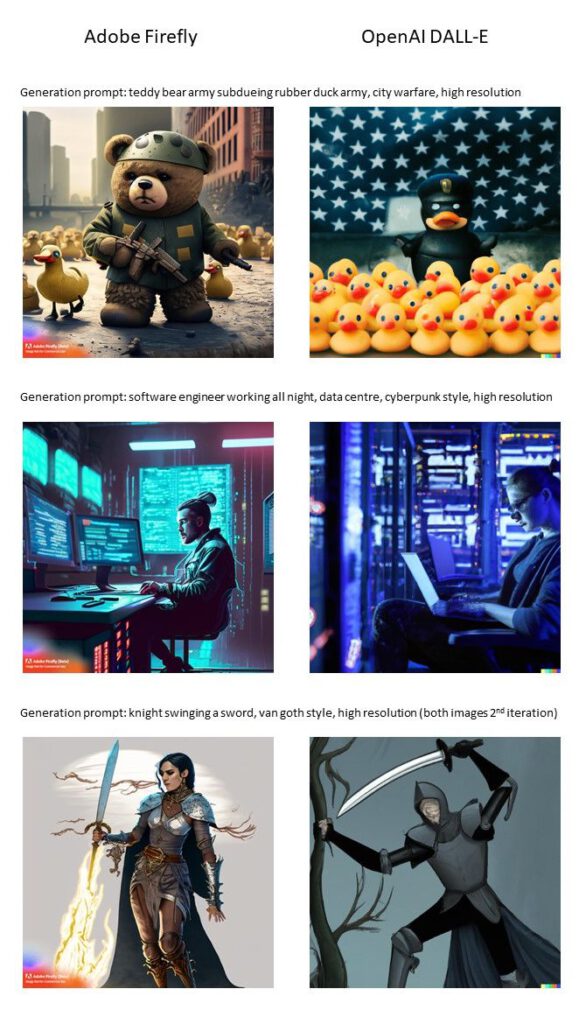

Here are my test prompts, each shown with the best of the four images that each system returned for it.

My highlight was the armed teddy bear 🧸 from Firefly, while DALL-E insisted on giving me a rubber duck instead, misinterpreting my prompt. Both systems interpreted the software engineer prompt equally well, and for the request to generate a knight in Van Gogh's style (yes, there is a spelling error in the prompt, mea culpa), I liked DALL-E's result more than Firefly's.

Have fun trying your own prompts, and I look forward to hearing from you on 🚀 LinkedIn 🔔!